March 11, 2026 – General

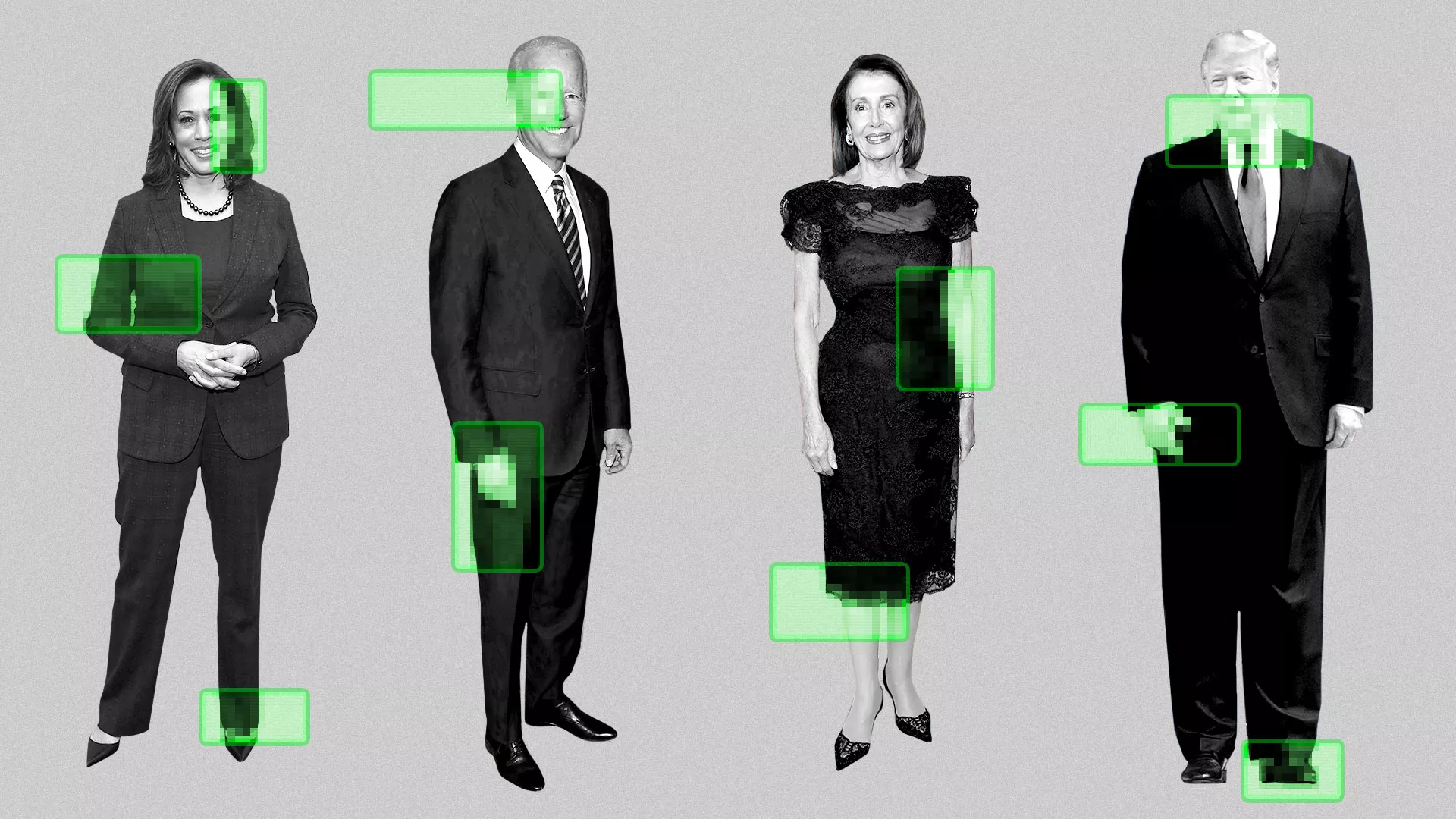

YouTube has introduced a new initiative aimed at protecting journalists, politicians, and government officials from AI-generated impersonation by expanding its deepfake detection technology to these groups. The move reflects growing concern about the misuse of artificial intelligence to create realistic fake videos that can spread misinformation or damage reputations.

The technology, known as “likeness detection,” analyzes videos uploaded to the platform to identify AI-generated content that imitates a person’s face or appearance. The system works similarly to YouTube’s copyright protection tool Content ID, but instead of identifying copyrighted audio or video, it scans for simulated faces produced using generative AI tools.

Under the expanded program, eligible participants such as journalists, government officials, and political candidates can submit verification materials, including identification and a video recording. Once enrolled, the system monitors uploaded videos for content that appears to replicate their likeness using artificial intelligence. If suspicious content is detected, participants can review it and request removal if it violates YouTube’s policies on privacy or impersonation.

YouTube officials say the expansion focuses on individuals who play a prominent role in public discourse, where deepfakes could have serious consequences. Company representatives noted that AI impersonation poses heightened risks for journalists and political figures because manipulated videos can mislead audiences, distort political debates, or undermine trust in news reporting.

The initiative builds on a pilot program introduced in 2025 that initially allowed some creators in the YouTube Partner Program to detect deepfake videos using their likeness. The new phase broadens access to include journalists and public officials whose identities could be exploited in misinformation campaigns.

YouTube says the number of takedown requests generated through the system has so far been relatively small. Many flagged videos were determined to be benign or creative uses of AI rather than harmful impersonations. However, the company expects the need for such protections to increase as generative AI tools become more advanced and accessible.

The initiative is part of broader efforts by technology companies and lawmakers to address synthetic media and deepfakes, which have increasingly appeared in political campaigns, online scams, and disinformation operations around the world.

YouTube has indicated that the technology may eventually expand to detect AI-generated voices and other forms of digital impersonation, reflecting ongoing efforts to maintain trust and authenticity on online video platforms.

Reference –

https://www.axios.com/2026/03/10/youtube-deepfake-detection-journalists-politicians

YouTube expands AI deepfake detection to politicians, government officials, and journalists